AI Governance's Dirty Little Secret

Compliance isn't what improves patient outcomes.

Tom Barrett

3/27/2026 · 2 min read

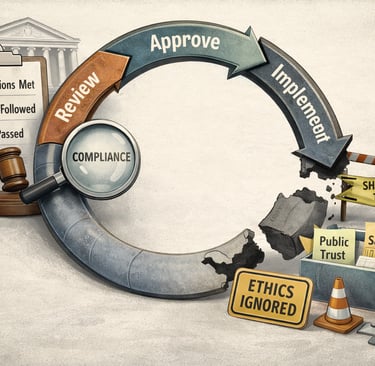

There is well-deserved concern about building AI systems that comply with regulations. The questions that come with that concern reveal where the biggest implementation gaps are. Who owns compliance? Who validates it? Who is responsible when a system is found to be out of compliance?

The biggest quality gap in AI implementations is not the deployment of non-compliant systems, though. It is the gap between ethics and compliance.

Ethics in AI governance is crucial for ensuring fairness, transparency, and accountability in systems that increasingly shape healthcare, clinical data validation, and decision-making processes.

AI advances outpace governing legislation by months, sometimes by as much as a year, creating a dangerous lag in which ethical risks such as bias amplification or privacy breaches proliferate without enforceable safeguards. The lag shows up in the contrast between fragmented state-level regulations and delayed federal action.

This gap forces organizations to proactively adopt voluntary ethical frameworks and soft-law principles, bridging the void until comprehensive hard law catches up and heads off governance by default through courts or government bureaucracies.

Say you run a hospital in a rural community, serving an underserved county. Will the AI discard patient EHRs from that county because the small population makes the records look like outliers? What happens when patients are at higher risk for diabetes because of demographic factors, their population doesn't match the demographics database, and the AI decides their data is junk?

This is where compliance falls short and where ethics matters, so that patients continue to receive the care they need. No one wants a patient to be overlooked for a follow-up or a medication update because they live on the wrong county road or have a condition that is rare in the data.
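To make the rural-county scenario concrete, here is a minimal sketch of how a naive outlier filter can discard an entire small cohort, and one hedged alternative that routes small cohorts to a human reviewer instead. Everything in it is an assumption for illustration: the field names (`county`, `a1c`), the z-score threshold, the minimum cohort size, and the sample values are hypothetical and not drawn from any real system or dataset.

```python
from collections import defaultdict
from statistics import mean, stdev


def global_outliers(records, field, threshold=2.0):
    """Indices of records whose z-score on `field` exceeds the threshold."""
    values = [r[field] for r in records]
    mu, sigma = mean(values), stdev(values)
    if sigma == 0:
        return []
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]


def cohort_aware_outliers(records, field, cohort_field,
                          threshold=2.0, min_cohort_size=5):
    """Score each record against its own cohort rather than the whole population.

    Returns (flagged, needs_review): indices flagged as outliers inside a
    large-enough cohort, and indices from cohorts too small to score, which
    go to a human reviewer instead of being discarded.
    """
    cohorts = defaultdict(list)
    for i, r in enumerate(records):
        cohorts[r[cohort_field]].append(i)

    flagged, needs_review = [], []
    for indices in cohorts.values():
        if len(indices) < min_cohort_size:
            needs_review.extend(indices)
            continue
        subset = [records[i] for i in indices]
        flagged.extend(indices[j] for j in global_outliers(subset, field, threshold))
    return sorted(flagged), sorted(needs_review)


# Hypothetical data: 20 records from a large county, 3 from a small rural one.
urban_values = [5.4, 5.5, 5.6, 5.5, 5.4, 5.6, 5.5, 5.5, 5.4, 5.6] * 2
urban = [{"county": "urban", "a1c": v} for v in urban_values]
rural = [{"county": "rural", "a1c": v} for v in [9.0, 9.2, 8.8]]
records = urban + rural

naive = global_outliers(records, "a1c")
flagged, needs_review = cohort_aware_outliers(records, "a1c", "county")

print("naive filter would drop:", naive)
print("cohort-aware filter flags:", flagged)
print("sent to human review:", needs_review)
```

In this toy data the naive filter drops exactly the three rural patients, the ones with the elevated readings who most need follow-up. The cohort-aware version drops no one and hands the small cohort to a person, which is an ethical design choice rather than a compliance requirement.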

The same can be said for privacy breaches. AI is notorious for not catching HIPAA violations before they happen. It's so easy to match a name with a date, and heaven forbid an MRN is included in the data that might be exposed. Red teams catch a lot of cases, but in clinical quality, just as in software quality, what matters are the edge cases, the ones where you don't expect a problem.

A clinical AI system designed for diagnostic imaging analysis might create a HIPAA violation by transmitting unencrypted PHI to a non-HIPAA-compliant cloud server for real-time processing without a Business Associate Agreement (BAA) in place. This unauthorized disclosure exposes sensitive data to third-party vendors who could store, reuse, or even train their models on it, triggering breach notification requirements and fines up to $50,000 per violation.

Without proper encryption, access controls, and vendor vetting, such implementations risk amplifying breaches across thousands of records during automated workflows. Human foresight prevents HIPAA violations in clinical AI implementations through proactive risk assessment, rigorous vendor vetting, and "human-in-the-loop" oversight protocols.

Prevention steps that human-led AI governance provides include:

Pre-deployment audits to verify encryption standards

BAAs with AI vendors

Data flow mappings that flag unencrypted PHI transmission to cloud servers before activation
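The prevention steps above can be sketched as a simple pre-activation check over a data flow map. This is only an illustration under stated assumptions: the `DataFlow` fields, the system names, and the wording of the findings are hypothetical, and a real audit would draw these facts from contracts and network configuration rather than hand-entered flags.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    """One edge in a hypothetical data flow map."""
    source: str
    destination: str
    contains_phi: bool
    encrypted_in_transit: bool
    baa_signed: bool


def audit_flow(flow):
    """Return a list of findings for one flow; an empty list means none found."""
    if not flow.contains_phi:
        return []
    findings = []
    if not flow.encrypted_in_transit:
        findings.append("PHI transmitted without encryption")
    if not flow.baa_signed:
        findings.append("no BAA on file for destination")
    return findings


def blocking_findings(flows):
    """Map each problematic flow to its findings so it can be fixed before go-live."""
    report = {}
    for flow in flows:
        findings = audit_flow(flow)
        if findings:
            report[f"{flow.source} -> {flow.destination}"] = findings
    return report


# Hypothetical flows for an imaging analysis deployment.
flows = [
    DataFlow("PACS", "on-prem inference server", True, True, True),
    DataFlow("PACS", "vendor cloud API", True, False, False),
    DataFlow("scheduler", "metrics dashboard", False, False, False),
]

report = blocking_findings(flows)
for route, findings in report.items():
    print(route, findings)
```

Only the second flow is reported, with both findings. The third flow is unencrypted but carries no PHI, so this sketch leaves it alone; whether that is acceptable is a policy decision for the governance team.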

It's also important to implement clinician review workflows where humans validate AI outputs and access logs in real time, catching unauthorized disclosures early via attribute-based controls and audit trails. Finally, you can establish governance policies for ongoing monitoring, including regular bias checks and incident response drills, ensuring ethical frameworks bridge compliance gaps until tech evolves.
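As a rough illustration of attribute-based controls paired with an audit trail, the sketch below decides each access request from attributes of the user and the record, logs every decision, and surfaces the denials for a human to review. The roles, purposes, and department-matching rule are hypothetical policy choices made up for this example, not a recommended or standard policy.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy attributes; a real policy would come from governance.
CLINICAL_ROLES = {"physician", "nurse"}
ALLOWED_PURPOSES = {"treatment", "quality_review"}


@dataclass(frozen=True)
class AccessRequest:
    user_role: str
    user_department: str
    record_department: str
    purpose: str


def is_allowed(req):
    """Attribute-based decision: role, department match, and stated purpose."""
    return (
        req.user_role in CLINICAL_ROLES
        and req.user_department == req.record_department
        and req.purpose in ALLOWED_PURPOSES
    )


@dataclass
class AuditTrail:
    entries: list = field(default_factory=list)

    def record(self, req):
        """Evaluate the request, log the decision with a UTC timestamp, return it."""
        allowed = is_allowed(req)
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "request": req,
            "allowed": allowed,
        })
        return allowed

    def denied(self):
        """Entries a human reviewer should look at first."""
        return [e for e in self.entries if not e["allowed"]]


trail = AuditTrail()
trail.record(AccessRequest("nurse", "cardiology", "cardiology", "treatment"))
trail.record(AccessRequest("nurse", "cardiology", "oncology", "treatment"))
trail.record(AccessRequest("analyst", "cardiology", "cardiology", "model_training"))

for entry in trail.denied():
    print(entry["time"], entry["request"])
```

Logging allowed and denied requests alike is deliberate: the edge cases a reviewer cares about are sometimes the accesses that the policy permitted.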

Compliance will always be important, but it is really the bare minimum. As AI governance professionals, we must stay ahead of the curve, using our ethics training and privacy certifications to anticipate what legislators cannot yet see.